Keith Smith - My Blog

Life and Work Balance

Monday, February 22, 2016 - Posted by Keith A. Smith, in Journal of thoughts

This post is private; you need to be an active subscriber to view it.


vSphere 6.0 vCenter Windows 2012 R2 with SQL Server Install Guide

Tuesday, September 29, 2015 - Posted by Keith A. Smith, in VMware, Microsoft

VMware vSphere 6.0 brings a simplified deployment model that reduces the dependency on Microsoft SQL Server. You now have the option of using the built-in vPostgres database provided by VMware; on Windows, vPostgres is limited to 20 hosts and 200 virtual machines.

vCenter System requirements

Supported Windows Operating Systems for vCenter 6.0 Installation:

Supported Databases for vCenter 6.0 Installation:

1. Make sure that you are using a static IP for your VM and that you create forward and reverse DNS records on your DNS server. Also make sure that the machine is part of a Windows domain.

2. Create an account in your Active Directory; this will be used on the SQL server for the vCenter database.

3. Create a blank SQL database on a SQL server.

4. Once your blank database is created, add the account you created in your Active Directory. Make sure to give it sysadmin for the server role.

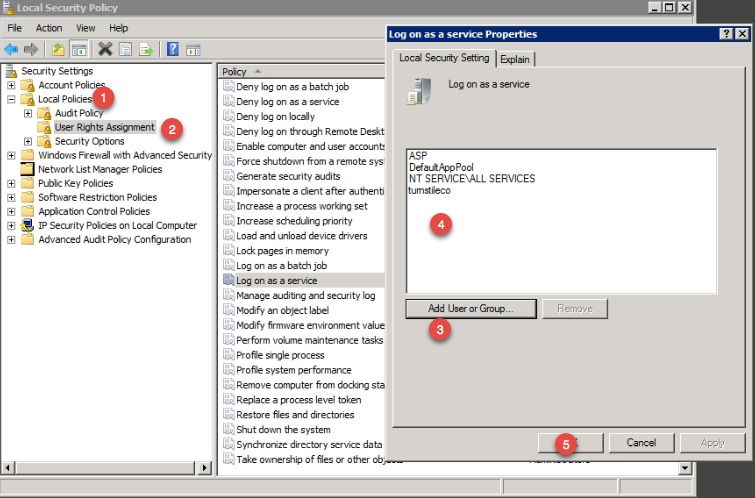

Before you start the installer, make sure your Windows Server VM is fully patched; otherwise you might get a prompt to patch the server. The two patches that are needed are below

5. During the vCenter installation process you might get a prompt asking you to give the administrator's account the right to Log On as a service on the server that runs vCenter. You need to grant the domain account you created earlier the right to Log On as a service. The steps:

6. Important: The SQL Server Native Client is necessary to create the system DSN. To download the SQL Server Native Client, use the link below. This ODBC Driver for SQL Server supports x86 and x64 connections to SQL Azure Database, SQL Server 2012, SQL Server 2008 R2, SQL Server 2008, and SQL Server 2005.

http://www.microsoft.com/en-us/download/confirmation.aspx?id=36434

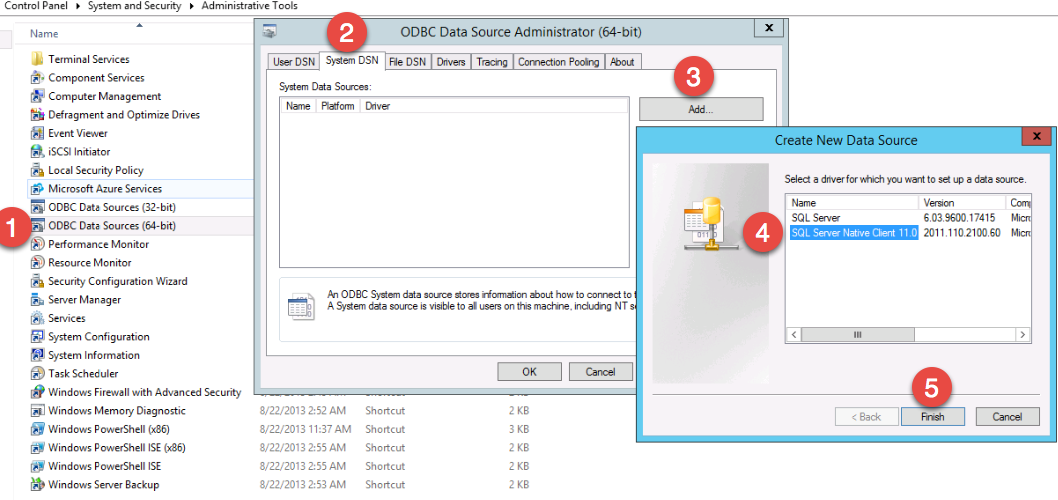

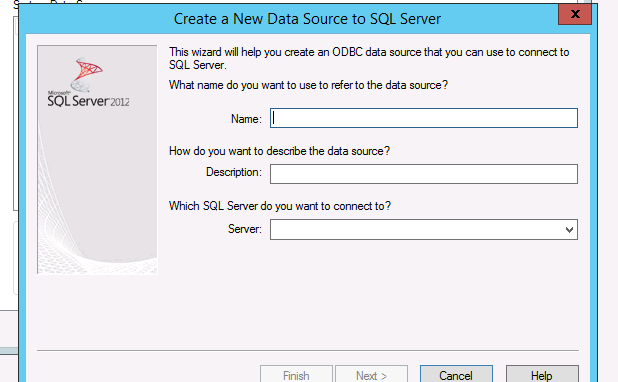

7. Now that the SQL Server Native Client is installed, create a system DSN through the ODBC Data Source Administrator (64-bit).

Proceed through the wizard

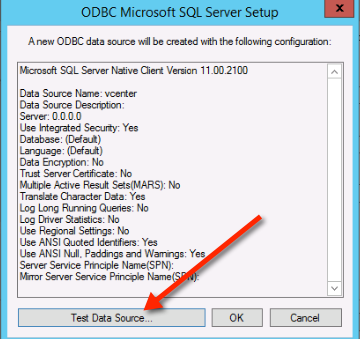

8. Once you have filled out all the SQL Server information, make sure you test the data source.

If everything is set up correctly, you should see this:

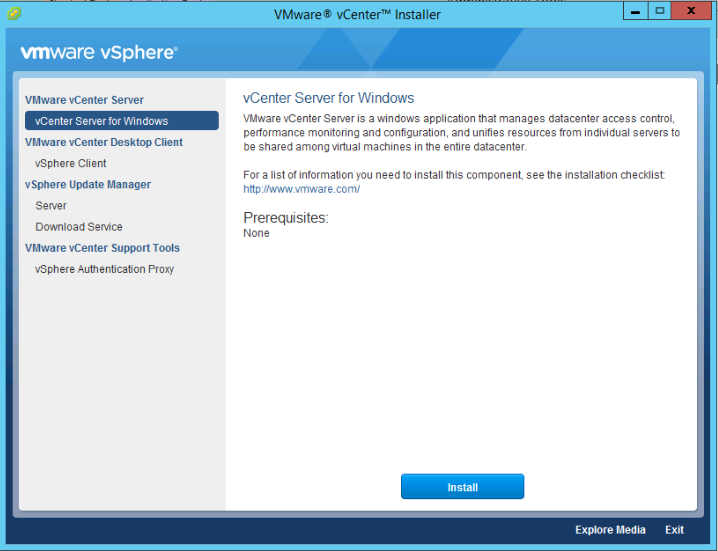

9. Mount the vCenter Server ISO and double-click autorun.exe.

10. Proceed through the vCenter install

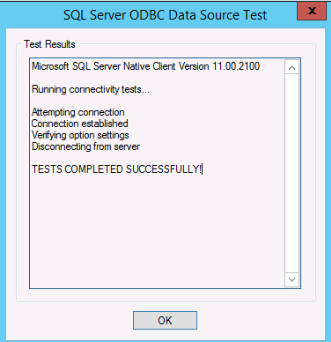

11. Once you get to the database settings, choose to use an external database. If your DSN is blank, click the refresh button and it should appear.

Continue through the setup wizard and leave the default values. You should then see the setup complete screen. At this point you can open a browser and visit the vCenter interface, which uses Adobe Flash.
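The DNS prerequisite from step 1 can be sanity-checked before running the installer. The following is only a minimal sketch in Python, not part of the original procedure: the function name `dns_round_trip` and the host name in the usage note are hypothetical, and it assumes the check is run from a machine that uses the same DNS server as the vCenter VM.

```python
import socket


def dns_round_trip(hostname,
                   forward=socket.gethostbyname,
                   reverse=socket.gethostbyaddr):
    """Resolve hostname to an IP, resolve that IP back to a name, and compare.

    Returns (ip, reverse_name, ok). ok is True when the reverse (PTR) name
    matches the name we started with, ignoring case and a trailing dot.
    The resolver functions are parameters so the logic can be exercised
    without touching a real DNS server.
    """
    ip = forward(hostname)            # forward (A record) lookup
    reverse_name = reverse(ip)[0]     # reverse (PTR record) lookup, primary name
    ok = reverse_name.lower().rstrip(".") == hostname.lower().rstrip(".")
    return ip, reverse_name, ok
```

Calling `dns_round_trip("vc01.example.local")` with a real fully qualified name (the one shown here is made up) returns the resolved address, the name the reverse lookup gave back, and whether the two agree. A lookup that fails outright raises an exception from the `socket` module, which is itself a sign that one of the records is missing.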

-End


Apply BgInfo via Group Policy Logon Script

Monday, September 28, 2015 - Posted by Keith A. Smith, in Microsoft

BgInfo is a well-known tool that sets a desktop wallpaper showing information about your system. This saves time when you need to collect details or troubleshoot your systems.

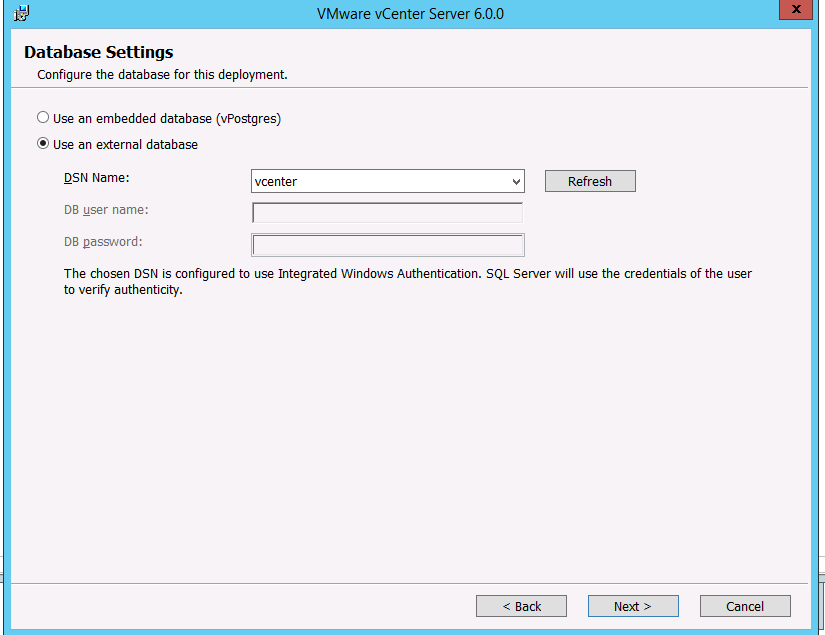

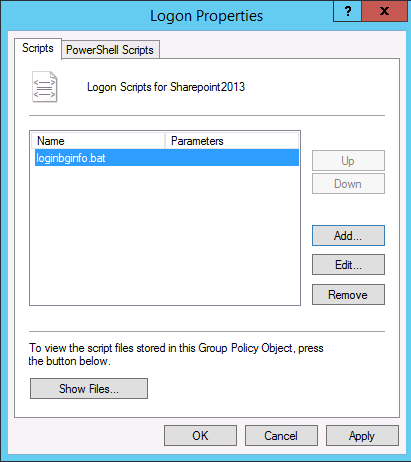

To centrally apply BgInfo, I recommend using a Group Policy logon script.

1. Prepare the background wallpaper to apply via BgInfo. You need to:

• Copy BgInfo.exe to a folder on \\yourdomain\netlogon

• Run BgInfo.exe, prepare the fields to display, and then save your template in a .bgi file

2. Prepare a logon script to run BgInfo. You can use the following script to run BgInfo:

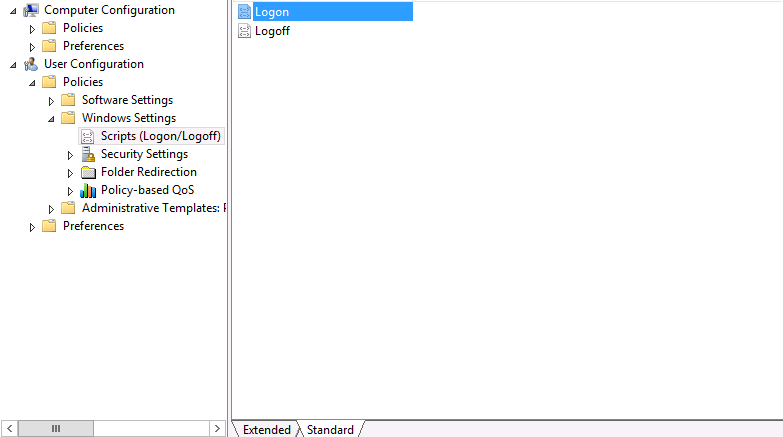

The commands above can be saved in a .bat file in the Netlogon folder.

3. Apply the BgInfo logon script using a Group Policy. To do so, proceed as follows:

After that, you need to link your Group Policy to the OU containing the accounts on which it will be applied.

-End


vCenter 6.0 interface sucks

Thursday, September 10, 2015 - Posted by Keith A. Smith, in VMware, Journal of thoughts

As mentioned here http://bit.ly/1UrCpqN, I finally made the move back to vSphere and decided to go with version 6. There has been chatter over the past few years that the release of a great new web client was coming. Well, it finally came, and honestly, they would have been better off sticking with the fat client. Why, you may ask? Because the interface is Flash! Yes, the same Flash that should have been deprecated by now, the same Flash that has more zero-day vulnerabilities than a tennis net has holes. I don't understand why any company would develop an interface in Flash or Java at this point. I can hear some people at VMware saying that developing the vSphere 6 web client in HTML5 would take more time. I think most if not all customers would say, OK, take the time, because HTML5 is the best way to go, point blank!

I will say this: vCenter doesn't totally suck.

Positives:

1) New platform architecture

2) Upgrade process is supposed to be a lot easier

Negatives:

1) Uses Flash: vulnerabilities, vulnerabilities, and the usability is terrible.

2) Uses Java: a catastrophe, with compatibility issues on every Java upgrade, "security enhancements", and vulnerabilities.

3) Uses browsers and plugins: impacted by browser releases or changes, versions, and vulnerabilities.

I think that VMware needs to be more transparent about what they are doing with the replacement of this terrible Flash interface. I also think that they need to keep the TAMs informed so they can keep customers apprised of the progress.

-End


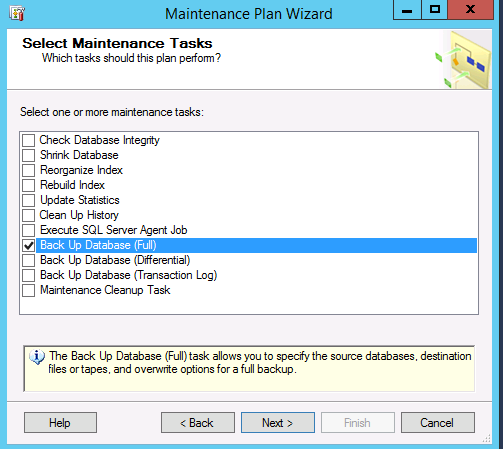

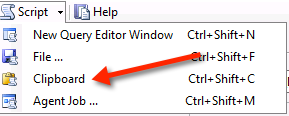

How To Restore Multiple SQL Databases At One Time

Wednesday, September 9, 2015 - Posted by Keith A. Smith, in Microsoft

One of the things I had on my to-do list was to take backups of a few SQL databases used by production systems so that testing could be done in a separate environment. During the last maintenance window, I got a chance to do this. The steps were:

1. Spin up a new server (perform the normal S.O.P. for provisioning).

2. Install SQL Server.

3. On the new test SQL server, add/create security logins (they need to match the source, which would be the SQL server where the database came from).

4. On prod servers that had multiple databases, I set up a maintenance plan that would take a full backup of the databases I selected. I did this because it was a quick and easy way to back up multiple databases in one fell swoop.

I stopped the services on the application servers to halt data being written, then executed the maintenance plan. At this point, I moved all the backups to the new test SQL server. I now had backups of all the databases I wanted in .bak files.

I was now tasked with restoring quite a few databases to this new SQL server. It would be great if I could do the reverse of #4 and restore multiple SQL databases at the same time; unfortunately, that is not an option from within SQL Server Management Studio. I could have created SSIS packages to copy the data between data sources but chose not to on this occasion.

I had an idea! In SQL Server Management Studio, go through all the steps for a database restore, choosing a source and destination database, then use the Script drop-down to copy the T-SQL script, which looked like this:

RESTORE DATABASE [DBNAME] FILE = N'DBNAME' FROM DISK = N'v:\DBNAME_backup_2015_09_09_RANDOM.bak' WITH FILE = 1, NOUNLOAD, STATS = 10
GO

Here DBNAME is the database name as it shows in SQL Server Management Studio.

With the T-SQL script in hand, I wanted to get the names of all the SQL backups. For this I ran the following from a command prompt in the folder holding the backups (driveletter:\folder):

dir /b > list.txt

This creates the text file inside that folder. If you want the file output elsewhere, use a fully qualified name. Remember that Windows uses \ as the directory delimiter, not /.

Using the list.txt file I created, I took the names of the databases and inserted them in the T-SQL script where it says DBNAME. I did this for each database, using some other methods to speed this part up. Once this was completed I executed the query; it took a few minutes, but the results showed no errors when completed. I refreshed the Object Explorer, and all the databases were now restored. I was able to change a connection string on a test application server and point it at the new test SQL server to confirm that the application was going to work. A restart of a few services and success! Data is present and current, at least as of the time of the backup.
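The fill-in-the-DBNAME step can also be scripted. The following is a minimal sketch in Python, not the method actually used above: the function names are hypothetical, and it assumes every backup file follows the DBNAME_backup_... naming shown in the restore script, sits in v:\, and has a logical file name equal to the database name, as in the template.

```python
from pathlib import PureWindowsPath


def database_name(backup_file: str) -> str:
    """Derive the database name from a backup file name.

    Assumes the pattern <DBNAME>_backup_<timestamp>.bak; if the marker is
    missing, the whole file stem is used as the name.
    """
    stem = PureWindowsPath(backup_file).stem
    name, marker, _ = stem.rpartition("_backup_")
    return name if marker else stem


def restore_statement(backup_file: str, backup_dir: str = "v:\\") -> str:
    """Fill the post's RESTORE template in for one backup file."""
    db = database_name(backup_file)
    disk = str(PureWindowsPath(backup_dir) / backup_file)
    # Escape ] inside [identifiers] and ' inside N'string literals'.
    ident = db.replace("]", "]]")
    db_literal = db.replace("'", "''")
    disk_literal = disk.replace("'", "''")
    return (
        f"RESTORE DATABASE [{ident}] FILE = N'{db_literal}'\n"
        f"FROM DISK = N'{disk_literal}'\n"
        "WITH FILE = 1, NOUNLOAD, STATS = 10\n"
        "GO"
    )


def build_script(listing: str, backup_dir: str = "v:\\") -> str:
    """Turn the text of list.txt into one T-SQL script.

    Only .bak entries are used, because `dir /b > list.txt` also lists
    list.txt itself.
    """
    files = [line.strip() for line in listing.splitlines()]
    backups = [f for f in files if f.lower().endswith(".bak")]
    return "\n".join(restore_statement(f, backup_dir) for f in backups)
```

Reading list.txt and passing its text to `build_script` yields a script that can be pasted into a query window. It is worth reviewing the generated statements before executing them, since a backup whose file name or logical file name differs from the assumed pattern will produce a statement that needs to be adjusted by hand.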

|

|

0 Comments 0 CommentsTweet |